Which came first: the chicken or the egg? Every time there’s a new Android release, I question if there’s some hidden meaning behind some of the new features. Did the Android team introduce a new feature in anticipation of a future trend, to standardize something that’s already in use, because OEMs asked for it, or solely because it’s needed to support a feature on the next Pixel phone? It’s tough to pin down exactly why Google introduces a new Android feature sometimes, because they’re often tight-lipped about certain changes and I’m not privy to the meetings where the planning all goes down. But when Google announced the Pixel 7 series the other day, I had an “aha!” moment because I could finally align the phones’ new software features with the Android 13 APIs that power them.

I’ve been writing about Android for years now, and more often than not, whenever I thought a new Android feature was designed for a Pixel … it usually was. But before yesterday’s announcement, all I could do was speculate. Today, though, I can finally talk about how some of the new Pixel 7 features work under the hood. I’ll also talk about some of the features Google didn’t introduce yesterday, as it’s likely they’re holding them back for a future Pixel Feature Drop or the upcoming Pixel Tablet launch next year.

Every Pixel 7 feature backed by Android 13 APIs

There are a lot of new software features introduced with the Pixel 7 series, including Photo Unblur, faster Night Sight, Cinematic Blur, new At a Glance alerts, a new Quick Phrase, voice message transcription in the Messages app, face unlock, Clear Calling, and more. I won’t be mentioning any of those features any further, because they don’t rely on new functionality introduced within Android 13. Those Pixel 7 features are either part of Google’s proprietary apps (some within GMS and some exclusive to Pixel) or use functionality introduced in earlier Android releases.

For the Pixel 7 features in the following list, I’ll provide a brief summary of how they work under the hood because I’ve already written about them extensively in either my Android 13 deep dive or a previous edition of Android Dessert Bites. If you want all the nitty-gritty implementation details, I’ll provide a link to where you can learn more.

- Cough & snore detection

- The Pixel 7 adds a feature previously available on the Nest Hub: the ability to detect coughs and snores while you’re sleeping. Here’s how Google described the feature during the launch event:

- “And with Google Pixel 7, we’re using Protected Computing to keep your health and wellness data private. For example, with your permission, Digital Wellbeing can help you understand the quality of your sleep by analyzing audio from coughs and snores during the night. That audio data is never stored by or sent to Google. The processing happens right on your Pixel 7.” - Jen Fitzpatrick, SVP of Google Core Systems & Experiences.

- Under the hood, the Pixel 7’s cough and snore detection feature makes use of a new set of APIs in Android 13 called Ambient Context. Android 13 provides two sets of Ambient Context APIs: a client API and a provider API. Apps can implement the client API to receive data on AmbientContext events, which currently include coughing and snoring. This data includes the start and end times of each event, a confidence level that the event is actually a cough or snore, and the intensity level of the event. The data is provided by another app that implements the provider API. This way, only the app that implements the provider API needs to process the raw microphone data to determine whether a cough or a snore happened, so apps implementing the client API can access sleep-related data in a privacy-preserving manner. Only system apps can implement the client and provider APIs, though there’s a hint that Google will open up the client API to third-party apps in Android 14.

- On the Pixel 7, the Android System Intelligence app has a service that implements the Ambient Context provider API. As for the client API, there are currently two system apps that implement it: Digital Wellbeing and Google Clock. These two apps will surface cough and snore data in their respective “Bedtime” sections, according to a support page. However, the apps will first ask the user for permission to access the microphone. This is for better accountability, as the actual processing of microphone data is handled by Android System Intelligence, which doesn’t hold the Internet permission. For better efficiency, the Pixel 7 will likely deploy a nanoapp to the Tensor G2’s “always-on compute” (AoC) block, i.e., its sensor hub, which runs continuously while sipping much less power than the main CPU. This is how car crash detection is run so efficiently on the Pixel.

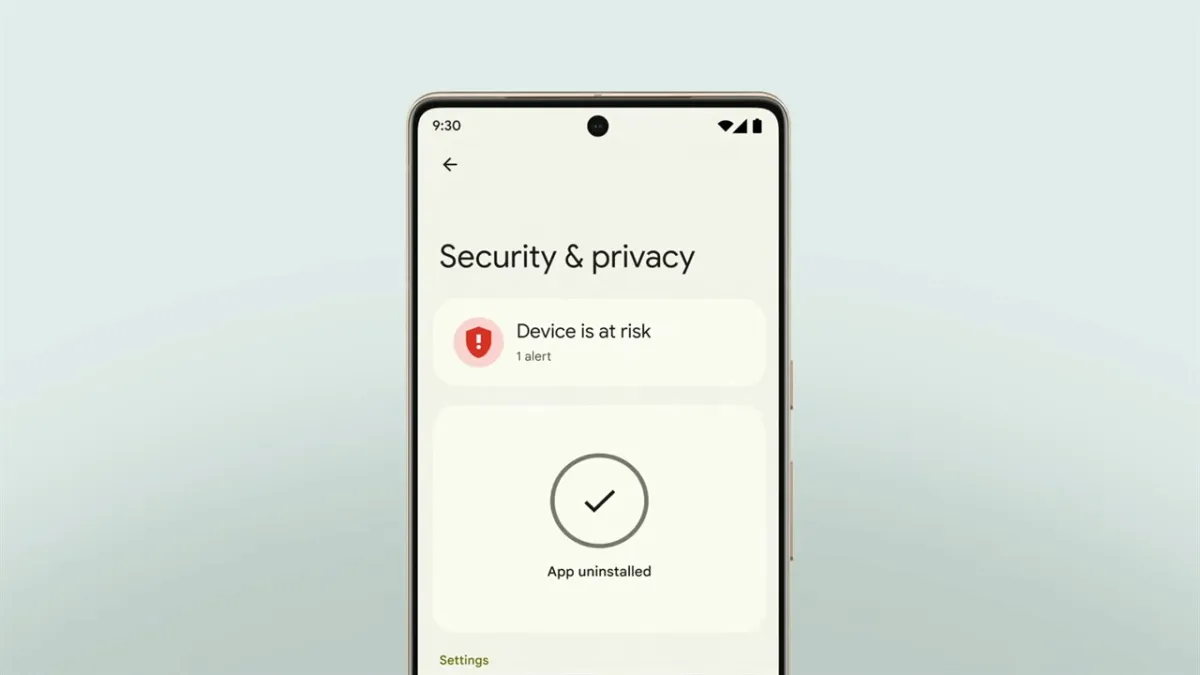
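To make the client/provider split a bit more concrete, here’s a rough sketch in plain Java of what a client ends up working with. Everything here is hypothetical: `SleepAudioEvent`, `SleepSummary`, and the 0 to 5 scales are names and values I made up for illustration, not the real Ambient Context classes (which are system-only anyway). The point is that the client only ever sees timestamps, a confidence level, and an intensity level, never audio.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.List;

// Hypothetical stand-in for the per-event data a provider hands to clients.
// These names are illustrative only; they are not the real Android classes.
final class SleepAudioEvent {
    enum Type { COUGH, SNORE }

    final Type type;
    final Instant start;
    final Instant end;
    final int confidence; // assumed scale: 0 (unsure) to 5 (very sure)
    final int intensity;  // assumed scale: 0 (faint) to 5 (strong)

    SleepAudioEvent(Type type, Instant start, Instant end, int confidence, int intensity) {
        this.type = type;
        this.start = start;
        this.end = end;
        this.confidence = confidence;
        this.intensity = intensity;
    }
}

// Client-side view: works only with events, never with raw audio.
final class SleepSummary {
    // Adds up the time covered by events of one type at or above a confidence cutoff.
    static Duration totalDuration(List<SleepAudioEvent> events,
                                  SleepAudioEvent.Type type,
                                  int minConfidence) {
        Duration total = Duration.ZERO;
        for (SleepAudioEvent event : events) {
            if (event.type == type && event.confidence >= minConfidence) {
                total = total.plus(Duration.between(event.start, event.end));
            }
        }
        return total;
    }
}
```

A “Bedtime” screen could call something like `SleepSummary.totalDuration(events, SleepAudioEvent.Type.SNORE, 3)` to show how long you were probably snoring, while the microphone processing stays entirely inside the provider app.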

- Safety Center

- Android has a lot of security and privacy features, but users may not know that many of them even exist. To solve this, Google has been working on a new, unified settings page for all security and privacy features. This page is internally called the “Safety Center” but just appears on the device as “Security & privacy.” Here’s how Google described the feature during the Pixel 7 launch:

- “Of course, core to your safety is knowing that you’re in control. And with Android 13, we’re making it even easier, with privacy and security settings all in one place. This includes new action cards that notify you of any safety risks, and provide easy steps to enhance your privacy and security. This new experience will be available first on Pixel devices later this year, and other Android phones soon after.” - Jen Fitzpatrick, SVP of Google Core Systems & Experiences.

- This feature was first teased at Google I/O 2022, where Google also unveiled the new “Protected by Android” branding. The Safety Center is part of the system app called “Permission Controller”, which is part of the Project Mainline module of the same name. The resources for the “Protected by Android” branding are contained within the “Safety Center Resources” system app, which is also part of the “Permission Controller” module. Since this feature is part of a Project Mainline module, Google could technically roll it out at the same time to Pixel and non-Pixel devices running Android 13, but they’re choosing to roll it out first to Pixel.

- 10-bit HDR video

- The Pixel 7 and Pixel 7 Pro support 10-bit HDR video capture, a first for a Pixel device. Previous Pixel devices used Google’s “HDRnet” algorithm to make 8-bit SDR video look like HDR video, but it wasn’t true 10-bit HDR. Here’s what Google had to say about the Pixel 7’s 10-bit HDR video during the launch event:

- “Tensor G2 powers lots of other video improvements, too, like new 10-bit HDR, so you can record brighter videos with wider color ranges and higher contrast. With 10-bit HDR, your videos look more like real life and utilize the full capabilities of Pixel 7 and Pixel 7 Pro’s amazing displays. We’ve even partnered with Snap, TikTok, and YouTube, so your HDR videos look vibrant when shared on your favorite apps.” - Shenaz Zack, Product Manager at Google on Pixel.

- Android, broadly, has two camera APIs available to applications: Camera2 and CameraX. Camera2 is the main camera API baked into the Android framework, while CameraX is a support library that runs on top of it. Full-fledged camera apps tend to use Camera2 because it offers more control and functionality, while apps that need the camera for secondary functionality tend to use CameraX because it’s simpler. (We talked a lot about Android’s camera APIs in this episode of the Android Bytes podcast if you’re interested in learning more.)

- In Android 13, Google extended the Camera2 API to include support for HDR video capture. Basically, OEMs (like Google) now have a standard way to tell apps using the Camera2 API (like Google Camera) that their devices support HDR video capture. This requires both an image sensor capable of 10-bit HDR and functions within the camera driver and hardware abstraction layer (HAL) to expose that functionality to Android. While it’s unclear exactly what format the Pixel 7’s 10-bit HDR mode will record in, display expert Dylan Raga speculates it’ll be HDR10 transcoded to HLG. The reason is that HLG supports SDR fallback, so Pixel 7 users wouldn’t need to upload two separate videos (an HDR and an SDR version) to social media for viewers without an HDR display. The Android developer documentation states that devices that expose 10-bit HDR support have to at least support HLG10, so there’s that.

- Other OEMs have had 10-bit HDR video for a while now, so why is Google only now adding API support to Android and shipping it on a Pixel? The likely reason they’ve held off so long is that before Android 13, Android lacked support for a feature called SDR dimming. Here's how Google explains SDR dimming:

- “When HDR content is on screen, the screen brightness is increased to accommodate the increased luminance range of the HDR content. Any SDR content that is also on screen is seamlessly dimmed as the screen brightness increases so that the perceptual brightness of the SDR content doesn't change.”

- I wrote a more thorough explanation of SDR dimming in this earlier edition of Android Dessert Bites if you want to learn some of the science behind it. This AOSP document confirms the SDR dimming feature made it into Android 13, but it’s worth noting that it requires OEMs to do some work to implement it.
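To put some rough numbers on Google’s description of SDR dimming, here’s a small sketch in plain Java. I’m assuming the dimming is a simple ratio in linear light (the brightness SDR white had before the boost, divided by the boosted display brightness); the real compositor pipeline has more going on. `SdrDimming` and its method names are hypothetical, and the nit values in the usage note are made-up examples rather than Pixel 7 measurements.

```java
// Hypothetical helper illustrating the idea behind SDR dimming.
final class SdrDimming {
    // Fraction of linear light to keep for SDR content when the panel is
    // driven brighter for HDR. Never brightens SDR (ratio is capped at 1.0).
    static double dimmingRatio(double sdrWhiteNits, double displayNits) {
        if (sdrWhiteNits <= 0 || displayNits <= 0) {
            throw new IllegalArgumentException("luminance values must be positive");
        }
        return Math.min(1.0, sdrWhiteNits / displayNits);
    }

    // Luminance that SDR white ends up at after dimming is applied.
    static double sdrOutputNits(double sdrWhiteNits, double displayNits) {
        return displayNits * dimmingRatio(sdrWhiteNits, displayNits);
    }
}
```

For example, if SDR white was sitting at 200 nits and the panel is pushed to 800 nits for an HDR video, `dimmingRatio(200, 800)` gives 0.25, so SDR content is scaled to a quarter of the panel’s output and still lands at 200 nits. That’s the “perceptual brightness doesn’t change” part of the quote.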

Those are the Pixel 7 features backed by Android 13 APIs that Google announced during the Made by Google launch event yesterday, but as I mentioned earlier, I’ll also talk about features that Google didn’t announce. Some of these will be available at launch, while others may arrive in a future Pixel Feature Drop.

- Screen resolution settings

- The Pixel 7 has a 6.3-inch display at FHD+ (1080x2400) resolution, while the Pixel 7 Pro has a 6.7-inch display at QHD+ (1440x3120) resolution. For battery savings, Pixel 7 Pro owners should be able to go to Settings > Display > Screen resolution and choose the “high resolution” option to switch the resolution to FHD. This should result in modest power savings during day-to-day use but could result in greater power savings when the GPU is under intense load (like during gaming). The “screen resolution” settings page is new to Android 13, but it only appears if there’s both an FHD and a QHD display mode exposed to Android. The Pixel 7 Pro’s display driver lists two FHD screen modes, suggesting the panel offers native support for that resolution.

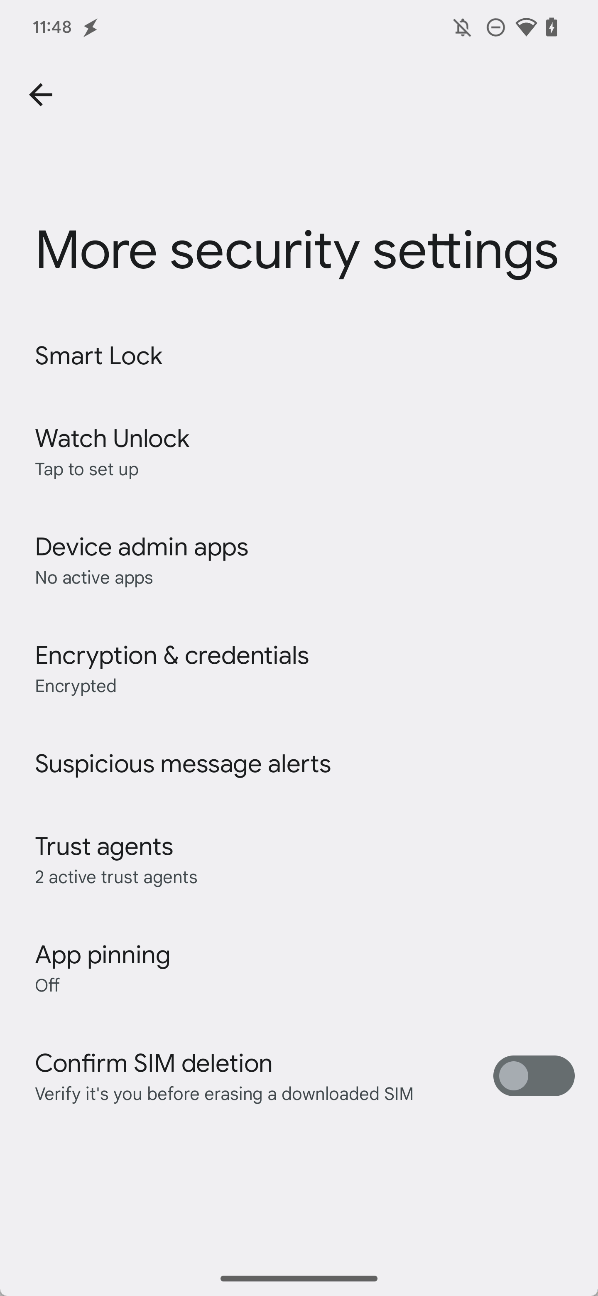

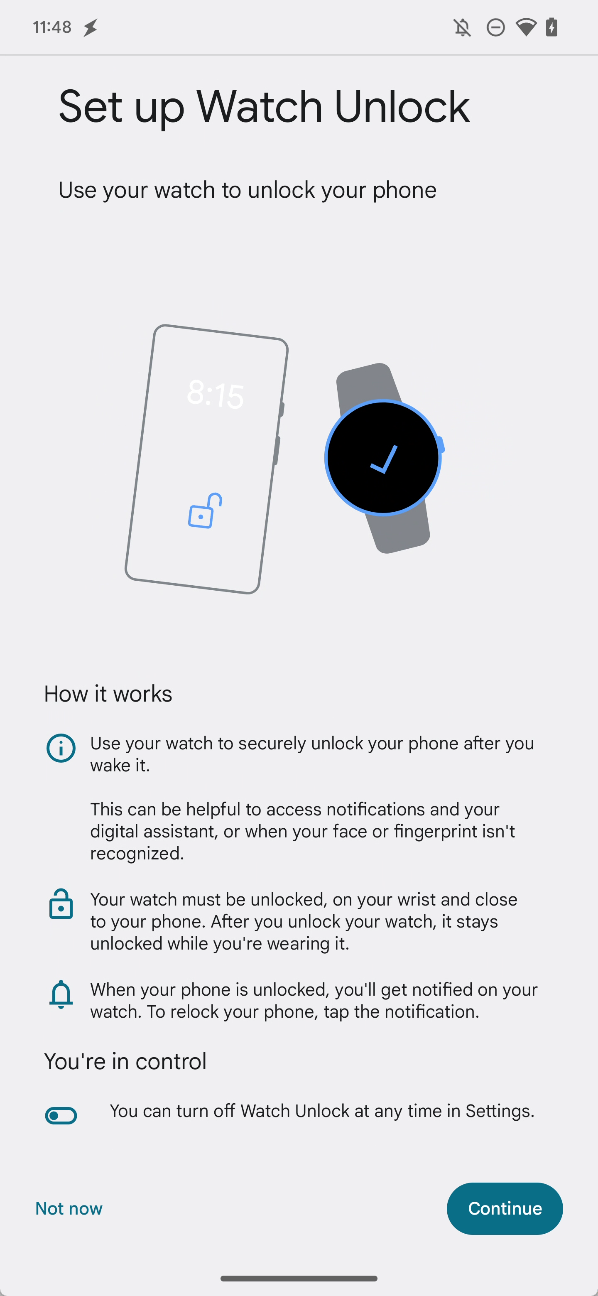
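The “only appears if both modes are exposed” rule is easy to picture as code. Here’s a minimal sketch in plain Java, assuming the check simply looks for a 1080-wide and a 1440-wide mode among the display modes the device reports. `DisplayModeInfo` and `ScreenResolutionSetting` are hypothetical names of mine, not the actual Settings classes.

```java
import java.util.List;

// Hypothetical description of one display mode reported by the device.
final class DisplayModeInfo {
    final int width;
    final int height;

    DisplayModeInfo(int width, int height) {
        this.width = width;
        this.height = height;
    }
}

final class ScreenResolutionSetting {
    static final int FHD_WIDTH = 1080;
    static final int QHD_WIDTH = 1440;

    // The settings page is only worth showing when the user can actually
    // choose between an FHD mode and a QHD mode.
    static boolean isAvailable(List<DisplayModeInfo> modes) {
        boolean hasFhd = false;
        boolean hasQhd = false;
        for (DisplayModeInfo mode : modes) {
            // Use the short side so the check works in either orientation.
            int shortSide = Math.min(mode.width, mode.height);
            if (shortSide == FHD_WIDTH) {
                hasFhd = true;
            } else if (shortSide == QHD_WIDTH) {
                hasQhd = true;
            }
        }
        return hasFhd && hasQhd;
    }
}
```

Under this sketch, a phone that only reports a 1080x2400 mode never shows the page, while one that reports both a 1080-wide mode and a 1440x3120 mode does.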

- Watch Unlock

- One of the handiest things about having a smartwatch is that it lets you keep your Android phone unlocked when they’re connected. You can do this by adding the smartwatch as a “trusted device” under Smart Lock, a service within the Google Play Services app. It uses Android’s underlying Trust Agent API to extend how long the device is kept unlocked. The problem with setting a smartwatch as a “trusted device” is that it doesn’t have to be worn or unlocked to unlock your phone — it just has to be connected to it via Bluetooth.

- To solve this, Google introduced the Active Unlock API in Android 13. This works in conjunction with the Pixel 7’s face unlock feature so that biometric authentication errors, which are more likely to happen because face unlock is a “Class 1” biometric, won’t necessarily force you to enter your PIN, pattern, or password to unlock your device.

- Instead, if you have the Google Pixel Watch and set up Watch Unlock — Google’s name for the feature that uses this new functionality — it can “securely unlock your phone after you wake it.” The setup wizard for Watch Unlock notes that this “can be helpful to access notifications and your digital assistant, or when your face or fingerprint isn’t recognized.” SystemUI notifies Google Play Services of biometric failures through new settings values. For Watch Unlock to work, “your watch must be unlocked, on your wrist and close to your phone.”
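The difference between the old “trusted device” behavior and Watch Unlock boils down to which conditions are checked. Here’s a tiny sketch in plain Java to show the contrast. `WatchState` and `UnlockPolicy` are hypothetical names, and I’m assuming a Bluetooth connection is still a prerequisite for Watch Unlock on top of the three conditions the setup wizard lists.

```java
// Hypothetical snapshot of what the phone knows about the paired watch.
final class WatchState {
    final boolean connected; // linked to the phone over Bluetooth
    final boolean unlocked;  // the watch itself is unlocked
    final boolean onWrist;   // the watch detects it is being worn
    final boolean nearby;    // the watch is close to the phone

    WatchState(boolean connected, boolean unlocked, boolean onWrist, boolean nearby) {
        this.connected = connected;
        this.unlocked = unlocked;
        this.onWrist = onWrist;
        this.nearby = nearby;
    }
}

final class UnlockPolicy {
    // Smart Lock "trusted device": a connection alone is enough.
    static boolean trustedDeviceKeepsUnlocked(WatchState watch) {
        return watch.connected;
    }

    // Watch Unlock: the watch must be unlocked, on your wrist, and close by.
    static boolean watchUnlockAllowed(WatchState watch) {
        return watch.connected && watch.unlocked && watch.onWrist && watch.nearby;
    }
}
```

A watch sitting locked on your nightstand passes the first check but fails the second, which is exactly the gap Watch Unlock is meant to close.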

- Dual eSIM support

- The Google Play Console listings for the Pixel 7 and Pixel 7 Pro reveal they declare the ‘android.hardware.telephony.euicc.mep’ feature, which indicates they support multiple enabled profiles on an eSIM. eSIM MEP is a new feature in Android 13 that lets devices simultaneously enable two SIM profiles stored on a single eSIM chip. Pixel phones currently support dual SIM functionality by enabling one SIM profile stored on the removable nano SIM card and enabling another SIM profile stored on the built-in eSIM chip. On a future Pixel, Google could get rid of the physical SIM card slot and just ship a single eSIM chip, because that single eSIM chip would be enough for dual SIM. If you want to learn more about how this new technology works, check out this previous edition of Android Dessert Bites.
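Here’s a quick way to see why MEP makes the physical slot optional, sketched in plain Java. The feature string is the one from the Play Console listings; `DualSimCheck`, its parameters, and the assumption that an eSIM chip without MEP can enable only one profile at a time are mine. Real devices are also limited by what the modem supports, which this sketch ignores.

```java
import java.util.Set;

// Hypothetical helper: can this hardware keep two SIM profiles enabled at once?
final class DualSimCheck {
    // Feature string declared by devices that support multiple enabled profiles.
    static final String FEATURE_EUICC_MEP = "android.hardware.telephony.euicc.mep";

    static boolean canRunDualSim(Set<String> declaredFeatures,
                                 int physicalSimSlots,
                                 int esimChips) {
        // Assumption: one enabled profile per eSIM chip without MEP, two with it.
        int profilesPerChip = declaredFeatures.contains(FEATURE_EUICC_MEP) ? 2 : 1;
        int maxEnabledProfiles = physicalSimSlots + esimChips * profilesPerChip;
        return maxEnabledProfiles >= 2;
    }
}
```

With one nano SIM slot and one eSIM chip, the check passes with or without MEP, which matches how Pixels do dual SIM today. Drop the slot to zero and it only passes when the MEP feature is declared.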

- 3D wallpapers

- Within the Pixel’s “Wallpaper & style” app is code for a new “3D wallpapers” feature that will “make your photo a 3D wallpaper that moves when your phone moves.” This makes use of Android 13’s new WallpaperEffects API. Apps pass a request to the system-defined wallpaper effects generation service to apply a cinematic effect to the wallpaper. In the Pixel’s case, the wallpaper effects generation service should be contained within the Android System Intelligence app, which will take your photo and turn it into a cinematic live wallpaper.

There are a couple of other Android 13-backed features to look forward to on the Pixel 7, including the Game Dashboard powered by GMS, Bluetooth LE Audio support, spatial audio with head tracking support, and Chromebook messaging app streaming using new virtual display APIs, but these aren’t exclusive to or launching first on the Pixel 7, so I’ve excluded them here. You can read more about them in my Android 13 changelog article if you’re curious.

Android 13 APIs that hint at Pixel Tablet features

As a bonus, here are some features I speculate will be available on the Google Pixel Tablet. There are several APIs in Android 13 that seem tailor-made for tablets, so there’s a good chance these APIs will power some of the Pixel Tablet software features.

- Revamped screen savers with complications

- This one is almost a certainty: Android 13 is revamping the years-old screen saver feature to include complications. Complications are overlaid on top of the screen saver and include things like air quality info, weather, time, date, and details of a Google Cast session. Since the Pixel Tablet will be docked a lot of the time, Google is working to make sure the docked UI looks good and shows useful information.

- Custom clock

- In a similar vein, the “Wallpaper & style” app hints that you’ll be able to pick a “custom clock”. It isn’t clear what kind of clock customization will be offered, but the feature seems to be tied to the 3D wallpapers effect I mentioned earlier.

- Hub mode

- In the earliest Android 13 developer previews, there were hints at a “hub mode” feature that would let users share apps between profiles on the lock screen. The idea was to allow family members, each of whom has their own profile on a tablet, to access certain apps from the lock screen without having to switch profiles. However, this feature may have been abandoned, as all code related to “hub mode” was removed from Android 13 prior to release.

- Cross device calling

- Imagine you get a call on your phone but it’s in another room and you’re lazing on your couch on a Sunday. If you’re wearing a smartwatch, you could answer the call. If not, you’d have to get up and grab your phone. Cross device calling offers an alternative: answer your phone call from your tablet. New APIs in Android 13 enable calls to be forwarded from a smartphone to a tablet or other device. This could be automatic (a call ringing on your phone shows up as a notification on your tablet) or it could be manual (you select your tablet from the output picker to move the call over). At least, that’s how I think it could work.

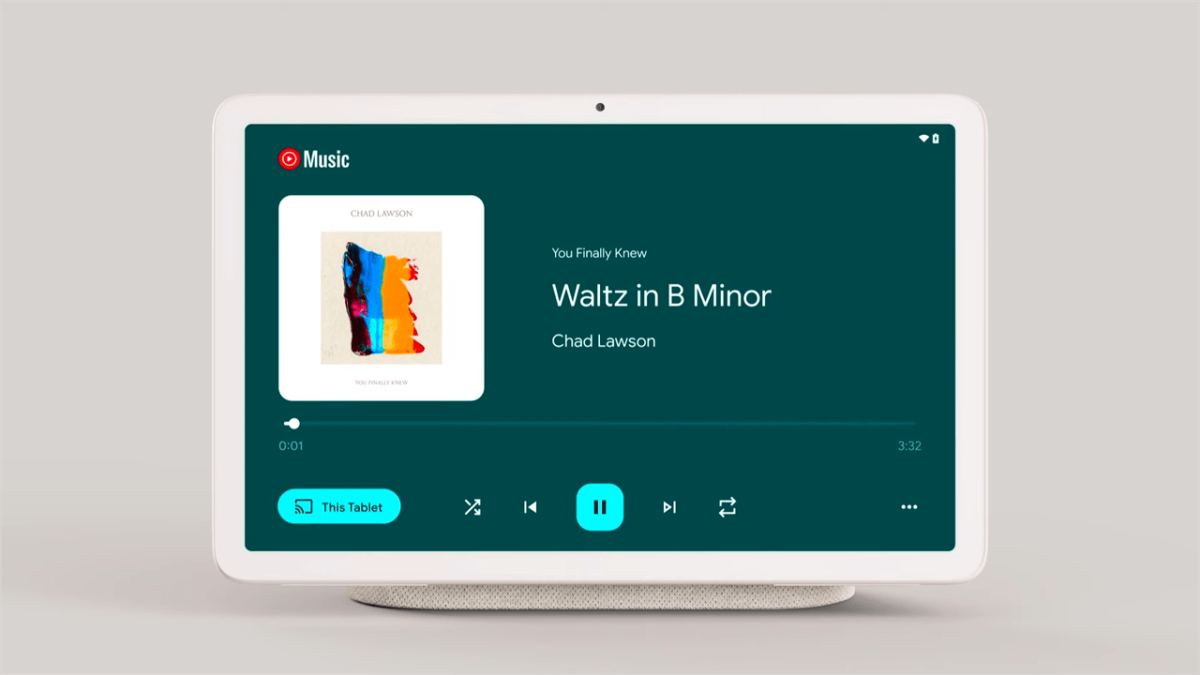

- Media Tap To Transfer

- Speaking of transfers, there’s a feature in Android 13 named “Media Tap To Transfer” that, despite its name, doesn’t actually depend on anything being tapped. Android 13 has some new SystemUI elements that facilitate the transfer of media from a “sender” device to a “receiver” device. For example, if you’re playing an album on Spotify on your Pixel 7, you could transfer this over to the Pixel Tablet, which has a much louder speaker. The actual transfer isn’t handled by the OS itself but rather by a third-party app, which could be Google Play Services. Also, the transfer doesn’t have to be initiated via NFC contact (i.e., a tap); it could be Bluetooth, ultra-wideband, or some other protocol.

- Docked charging

- Under the hood, Android 13 revamps a lot of power and battery-related code to add support for docking. A new version of the USB HAL adds support for limiting power transfer from the USB port, which could be used to control charging when the Pixel Tablet is docked. There’s also a function to enable USB data transfer while the device is docked, which would enable transferring files or debugging the tablet while it’s docked. The HAL also adds support for USB digital audio docks, which is likely how the Pixel Tablet plays audio through the dock’s speaker when the tablet is docked.

- Low light clock

- Android 13 has a new “low light clock” screen saver that simply shows a large text clock in the middle of the screen. This is designed to be shown when the tablet is docked and there isn’t a lot of ambient light. This mimics the behavior of the Nest Hub when you turn off the light in a room.

- Kids mode

- There’s a special “kids mode” for the navigation bar that tweaks the back and home icons, hides the recents overview button, and keeps the nav bar visible at all times. This feature makes sense for the Pixel Tablet, which is intended to be shared among multiple users at home, including kids.

- Stylus handwriting

- The Pixel Tablet will support USI styluses, so Google is pushing developers to add stylus handwriting support to their apps on Android 13. Android 13 lets keyboard apps declare support for stylus input and adds a developer option that lets the keyboard app receive stylus MotionEvents if text input is focused. The Chrome app, for example, has a flag that makes it so the keyboard app is opened to its stylus handwriting mode when tapping a text field with the stylus.

Those are the Android 13 APIs that I believe will power some Pixel Tablet features, but since the Pixel Tablet is launching in 2023, it’s too early to say which of these features will definitely make it in. Some of these features have a good chance of being added since they implement features seen on previous Nest Hubs, while others just seem like they’d be sensible additions given the direction Google’s going with this product.

While the Pixel brand may not sell as well as other smartphone brands, Pixel has an outsized influence on the Android platform owing to Google’s position as both an OEM and the developer of Android. As I’ve shown in this article, every Android release introduces several new APIs that seem to be designed for new Pixel features. The vast majority of new APIs and changes in each Android release aren’t related to the next Pixel phone, of course, but a surprising number are.

Though it’s fun to document what’s new in Android to feed speculation about what Google may be working on, the real benefit of documenting these changes is that they can be used by modders or other OEMs to build their own Pixel-like features. Usually what’s missing from AOSP is some ML model that Google trained or an app that implements a service in some way.

You could, for example, theoretically replicate the Pixel 7’s cough and snore detection if you created a detection service within an app that uses Android 13’s Ambient Context provider API and shipped it as part of your own ROM. It’d be a huge challenge, of course, but it’d be possible. You could also (theoretically) create your own 3D wallpapers by hooking up your own wallpaper effects generation service to Android 13’s WallpaperEffects API. Want to Rickroll spam callers? You could hook into the AOSP telephony APIs Google built for its call screen feature, like developer Pierre-Hugues Husson actually did.

I don’t know if many OEMs will use these APIs in the same way that Google did, or at all. The possibility is there, though, and since these APIs are part of AOSP, they’re worth covering even if they’re made for Pixel-proprietary features.